The core irony of generative AIs is that AIs were supposed to be all logic and no imagination. Instead, we get AIs that make up information, engage in (seemingly) emotional discussions, and are intensely creative. And that last fact makes many people deeply uncomfortable.

To be clear, there is no single definition of creativity, but researchers have developed a number of flawed tests that are widely used to measure the ability of humans to come up with diverse and meaningful ideas. The fact that these tests were flawed wasn’t that big a deal until, suddenly, AIs were able to pass all of them. GPT-4 now beats 91% of humans on a variation of the Alternative Uses Test for creativity and exceeds 99% of people on the Torrance Tests of Creative Thinking. We are running out of creativity tests that AIs cannot ace.

While these psychological tests are interesting, applying human tests to AI can be challenging. There is always the chance that the AI has previously been exposed to the results of similar tests and is just repeating answers (although the researchers in these studies have taken steps to minimize that risk). And, of course, psychological testing is not necessarily proof that AI can actually come up with useful ideas in the real world.

Yet, over the past couple of weeks, we have learned from three new experimental papers that AI really can be creative in settings with real-world implications. I want to briefly discuss these papers, and then give some practical tips on how to use AI for idea generation based on their results.

AI is creative in practice

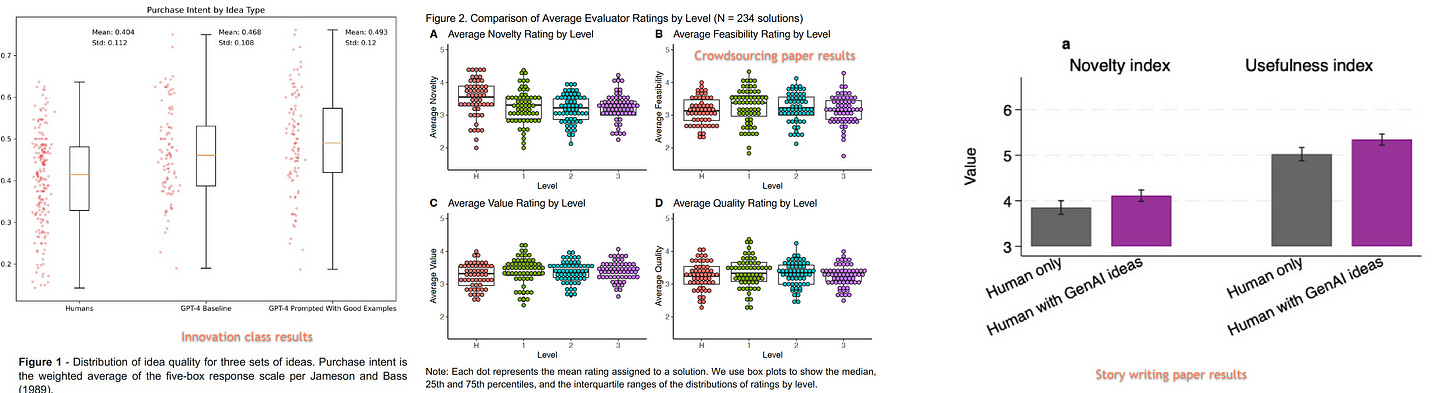

Each of the three papers directly compares AI-powered creativity with human creative effort in controlled experiments. The first major paper is from my colleagues at Wharton. They staged an idea-generation contest, pitting ChatGPT-4 against the students in a popular innovation class that has historically led to many startups. The researchers (Karan Girotra, Lennart Meincke, Christian Terwiesch, and Karl Ulrich) used human judges to assess idea quality and found that ChatGPT-4 generated more ideas than the students, at lower cost, and that those ideas were better. Even more impressive from a business perspective, outside judges’ purchase intent was higher for the AI-generated ideas as well! Of the 40 best ideas rated by the judges, 35 came from ChatGPT.

A second paper conducted a wide-ranging crowdsourcing contest, asking people to come up with business ideas based on reusing, recycling, or sharing products as part of the circular economy. The researchers (Léonard Boussioux, Jacqueline N. Lane, Miaomiao Zhang, Vladimir Jacimovic, and Karim R. Lakhani) then had judges rate those ideas and compared them to ideas generated by GPT-4. The overall quality of the AI- and human-generated ideas was similar, but the AI’s ideas were judged better on feasibility and impact, while the humans generated more novel ideas.

The final paper did something a bit different, focusing on creative writing ideas rather than business ideas. The study, by Anil R. Doshi and Oliver P. Hauser, compared humans writing short stories alone to humans who used AI to suggest 3-5 possible topics. Again, the AI proved helpful: humans with AI help wrote stories that were judged significantly more novel and more interesting than those written by humans alone. There were, however, two interesting caveats. First, the most creative people were helped least by the AI. Second, AI ideas were generally judged to be more similar to each other than ideas generated by people. Note, again, that the AI was used only to generate a small set of ideas, not to do the writing.

Key graphs comparing human- and AI-generated ideas from all three papers

So what does this mean? Reading these studies, it seems like there are a few clear conclusions:

- AI can generate creative ideas in real-life, practical situations. It can also help people generate better ideas.

- The ideas AI generates are better than what most people can come up with, but very creative people will beat the AI (at least for now) and may benefit less from using AI to generate ideas.

- There is more underlying similarity among the ideas that the current generation of AIs produces than among ideas generated by a large number of humans.

All of this suggests that humans still have a large role to play in innovation… but that they would be foolish not to include AI in that process, especially if they don’t consider themselves highly creative. So, how should we use AI to help generate ideas? Fortunately, the papers, and other research on innovation, have some good suggestions.

Prompting for Ideas

People get very hung up on the idea that you have to be great at prompting AIs with specific wording to get them to accomplish anything. But this just doesn’t seem to be the case in idea generation. In the paper comparing AI to crowdsourcing, the authors tested three kinds of prompts: basic ones that stated the problem, more advanced ones that gave the AI a persona to make it more like a human solver (“You are a Technical and Creative Professional, located in Europe.”), and a very advanced one that asked the AI to take the perspective of particular famous experts. While there were some differences between these groups, no one approach clearly dominated. So I wouldn’t worry too much about the exact wording of the prompt; you can experiment to see what works best.

Truthfully, simple prompts seemed to work fine. The authors of the innovation-contest paper, for example, used a simple system prompt to provide context: “You are a creative entrepreneur looking to generate new product ideas. The product will target college students in the United States. It should be a physical good, not a service or software. I’d like a product that could be sold at a retail price of less than about USD 50. The ideas are just ideas. The product need not yet exist, nor may it necessarily be clearly feasible. Number all ideas and give them a name. The name and idea are separated by a colon.” They also provided a second, user prompt: “Please generate ten ideas as ten separate paragraphs. The idea should be expressed as a paragraph of 40-80 words.” They repeated this process several times, because generating a lot of ideas is useful.
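For readers who want to script this, here is a minimal Python sketch of that setup. The two prompts are quoted from the paragraph above; everything else is an assumption: the `role`/`content` message list follows the common chat-API convention, `generate` is a hypothetical stand-in for whatever model call you use, and `parse_ideas` assumes the model actually follows the “Name: description” format the prompt asks for.

```python
import re
from typing import Callable

# Prompts quoted from the innovation-contest paper, as described in the text.
SYSTEM_PROMPT = (
    "You are a creative entrepreneur looking to generate new product ideas. "
    "The product will target college students in the United States. "
    "It should be a physical good, not a service or software. "
    "I'd like a product that could be sold at a retail price of less than about USD 50. "
    "The ideas are just ideas. The product need not yet exist, "
    "nor may it necessarily be clearly feasible. "
    "Number all ideas and give them a name. The name and idea are separated by a colon."
)
USER_PROMPT = (
    "Please generate ten ideas as ten separate paragraphs. "
    "The idea should be expressed as a paragraph of 40-80 words."
)

Messages = list[dict[str, str]]
# Matches a leading idea number such as "1." or "2)".
NUMBERED = re.compile(r"^\s*\d+[.)]\s*")


def build_messages(system: str, user: str) -> Messages:
    """Chat-style message list (the role/content convention is an assumption)."""
    return [{"role": "system", "content": system}, {"role": "user", "content": user}]


def parse_ideas(reply: str) -> list[tuple[str, str]]:
    """Split a reply into (name, description) pairs, one per paragraph."""
    ideas = []
    for para in reply.split("\n\n"):
        para = NUMBERED.sub("", para.strip())
        if ":" not in para:
            continue  # skip paragraphs that don't follow "Name: description"
        name, description = para.split(":", 1)
        ideas.append((name.strip(), description.strip()))
    return ideas


def collect_ideas(generate: Callable[[Messages], str], rounds: int) -> list[tuple[str, str]]:
    """Send the same prompt several times and pool all parsed ideas."""
    messages = build_messages(SYSTEM_PROMPT, USER_PROMPT)
    pooled = []
    for _ in range(rounds):
        pooled.extend(parse_ideas(generate(messages)))
    return pooled
```

Passing `generate` in as a parameter keeps the sketch independent of any particular model provider; you plug in your own call.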

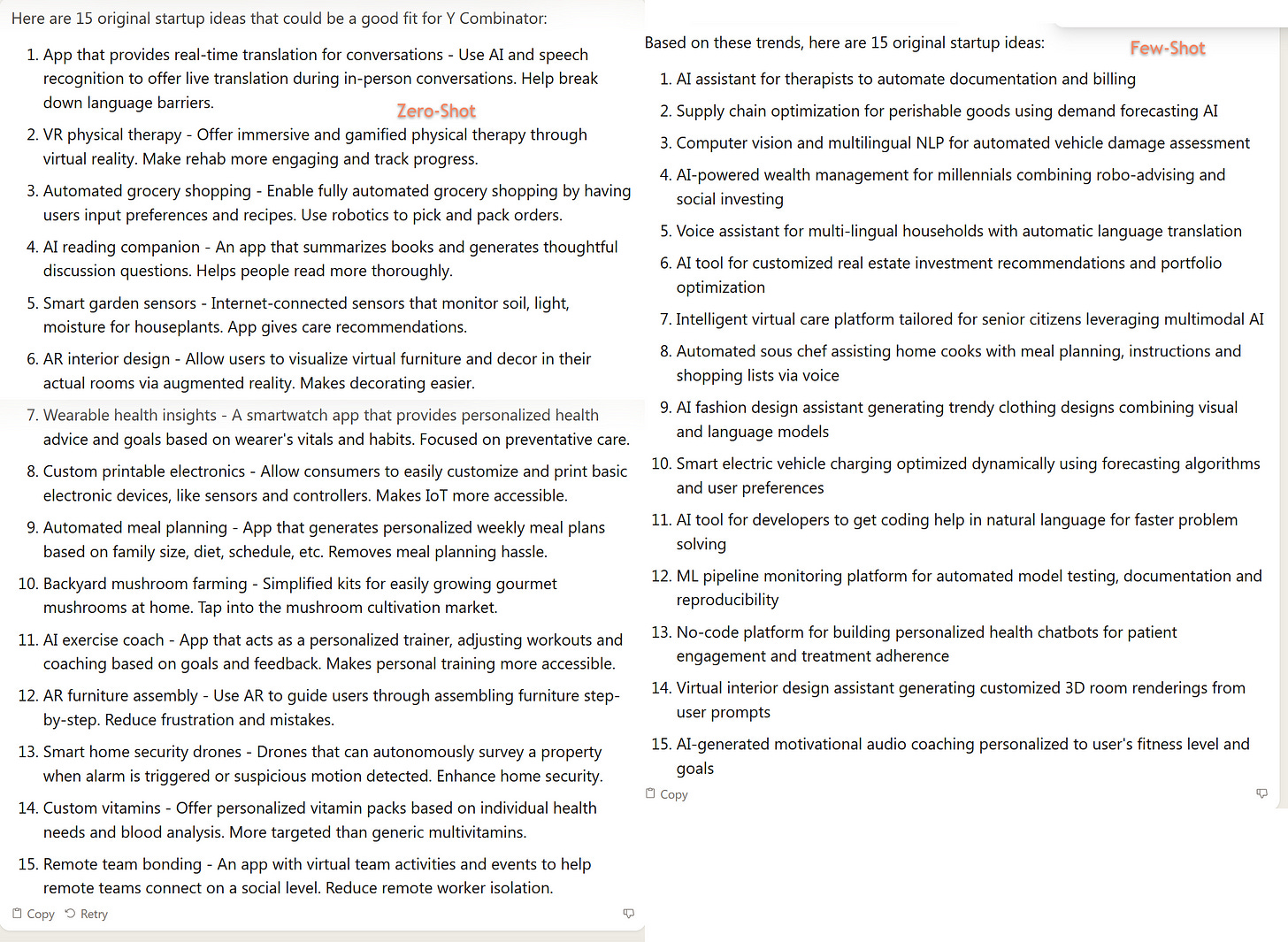

They also compared this GPT-4 prompt with one using few-shot learning. Few-shot learning is easy to do: you simply give the AI examples of the kind of results you would like to see before you ask it to generate ideas (hence “few-shot,” as opposed to “zero-shot” prompting, where you provide no examples). While the AI generated more and better ideas with the few-shot approach, the difference was not statistically significant. Still, I would generally suggest using few-shot techniques, because they seem to help subjectively, other research has found them valuable, and they are easy to implement.
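Structurally, a few-shot prompt is just the task with example outputs placed in front of it. The Python sketch below shows that structure; the preamble wording, the `Example n:` labels, and the placeholder examples are illustrative assumptions, not the format used in the paper.

```python
def few_shot_prompt(examples: list[str], task: str) -> str:
    """Prepend example outputs ("shots") to a task prompt.

    With no examples this returns the task unchanged, i.e. a zero-shot prompt.
    """
    if not examples:
        return task
    shots = "\n".join(f"Example {i}: {ex}" for i, ex in enumerate(examples, start=1))
    return f"Here are examples of the kind of ideas I want:\n{shots}\n\n{task}"


task = "Please generate ten ideas as ten separate paragraphs."
examples = ["Hypothetical idea A", "Hypothetical idea B"]  # placeholders, not real examples

zero_shot = few_shot_prompt([], task)
two_shot = few_shot_prompt(examples, task)
```

The same function covers both cases, which makes it easy to run a zero-shot and a few-shot version side by side and compare the results yourself.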

As an example of the difference, I asked Claude 2 to generate 15 original startup ideas that would be a good fit for Y Combinator (the famous accelerator). This was a zero-shot approach. Then I tried a few-shot method: I gave the AI a list of 400 recent Y Combinator startups, with a one-sentence description of each, and prompted: “Here are 400 of the latest startup ideas from Y Combinator. Come up with trends, then generate 15 original ideas combining these concepts.” You can see the difference, and why I preferred the few-shot approach.

Outside of these suggestions, I have a couple of my own. First, don’t just ask the AI to generate ideas; use constraints as well. In general, and contrary to what most people expect, AI is best at generating ideas when it is most constrained (so are humans!). Force it to give you less likely answers, and you will find more original combinations, which may help address the originality problem. You might ask: “You are an expert at problem solving and idea generation. When asked to solve a problem you come up with novel and creative ideas. Here is your first task: tell me 10 detailed ways an AI (or a superhero, or an astronaut, or any other odd profession) might do _____. Describe the details of each way.”
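To run that constraint prompt across several odd perspectives at once, a small template helps. In this Python sketch the template text is the prompt quoted above, with “do _____” turned into a `{task}` slot. The helper name `constrained_prompts` and the example task are hypothetical; the personas come from the prompt itself.

```python
CONSTRAINT_TEMPLATE = (
    "You are an expert at problem solving and idea generation. "
    "When asked to solve a problem you come up with novel and creative ideas. "
    "Here is your first task: tell me 10 detailed ways {persona} might {task}. "
    "Describe the details of each way."
)


def constrained_prompts(task: str, personas: list[str]) -> list[str]:
    """Build one prompt per persona; each persona constrains the AI toward less likely answers."""
    return [CONSTRAINT_TEMPLATE.format(persona=p, task=task) for p in personas]


# Personas from the text; the task is a hypothetical example.
prompts = constrained_prompts(
    "reduce food waste in a college dorm",
    ["an AI", "a superhero", "an astronaut"],
)
```

Each resulting prompt can be sent separately, so the odd perspectives don’t blur together in a single answer.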

You can also use techniques that take advantage of the way AI hallucinates plausible but interesting material, and use that material as a seed for creativity. Consider asking it for transcripts of fake interviews, for example: “Create an interview transcript between a product designer and a dentist about the problems the dentist has.” Or ask it to describe non-existent products: “Walk me through the interface for a fictional new water pump that has exciting new features.” There is an art to this that you can learn through experimentation, and feel free to share other prompt techniques that work for you in the comments.

AI as creative engine

We still don’t know how original AIs can actually be, and I often see people argue that LLMs cannot generate any new ideas. To me, it is increasingly clear that this is not true, at least in a practical, rather than philosophical, sense. In the real world, most new ideas do not come out of the ether; they are based on combinations of existing concepts, which is why innovation scholars have long pointed to the importance of recombination in generating ideas. And LLMs are very good at this, acting as connection machines between unexpected concepts. During training, they learn relationships between tokens that may seem unrelated to humans but represent some deeper connections. Add in the randomness that comes with AI output, and the result is, effectively, a powerful creative ability.
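Where does that randomness come from? One common source is temperature sampling: the model’s scores for candidate next tokens are turned into probabilities, and one token is drawn at random. The Python sketch below illustrates the idea under that assumption, using made-up scores for three hypothetical tokens; real models work over vocabularies of many thousands of tokens and often combine temperature with other decoding settings.

```python
import math
import random


def sample_with_temperature(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Draw one token from softmax(logits / temperature)."""
    if temperature <= 0:
        # Temperature 0 is conventionally treated as greedy decoding: always the top token.
        return max(logits, key=logits.get)
    scaled = {tok: score / temperature for tok, score in logits.items()}
    top = max(scaled.values())
    # Subtract the max before exponentiating for numerical stability.
    weights = {tok: math.exp(s - top) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


rng = random.Random(0)  # seeded so runs are repeatable
logits = {"chair": 2.0, "lamp": 1.0, "kite": 0.2}  # made-up scores, not from a real model
draws = [sample_with_temperature(logits, 1.0, rng) for _ in range(10)]
```

Lower temperatures concentrate draws on the top-scoring token; higher temperatures give less likely tokens more chances, which is one way the same prompt can yield different ideas on each run.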

In the most practical sense, we are now much less limited by ideas than ever before. Even people who don’t consider themselves creative now have access to a machine that will generate innovative concepts that beat those of most humans (though not the most creative ones). Where previously only a few people had the ability to come up with good ideas, now many do. This is an astonishing change in the landscape of human creativity, and one that likely makes execution, not raw creativity, a more distinguishing factor for future innovations.